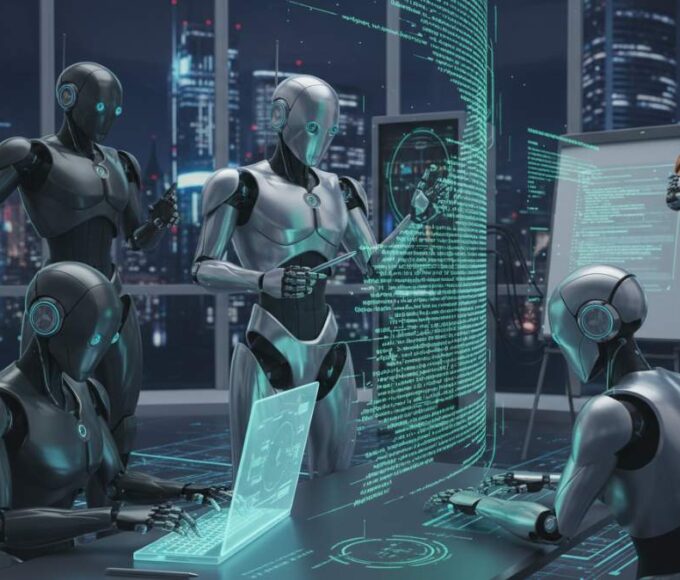

Google has introduced a new artificial intelligence model called Gemma 4, expanding its work on multimodal systems that can process more than one type of input.

The model was announced on 2 April and is designed to handle both text and image inputs while producing text responses. Some versions also support audio tasks such as speech recognition and translation.

Gemma 4 is released with open weights, allowing developers to modify and adapt the model for their own projects. Google says this approach makes it easier to use the technology across different platforms and devices.

The model comes in four sizes, designed for different levels of computing power. Smaller versions can run on laptops and mobile devices, while larger versions are built for more demanding tasks on high-performance systems.

Gemma 4 also features a large context window of up to 256,000 tokens. This allows the system to process long documents or conversations in a single interaction.
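To give a sense of what a 256,000-token limit means in practice, here is a minimal sketch that checks whether a document is likely to fit in one request. The four-characters-per-token ratio, the reserved output budget and the function names are illustrative assumptions, not figures from Google; an exact count would require the model's own tokenizer.

```python
import math

CONTEXT_LIMIT = 256_000  # tokens: the maximum cited in the article


def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Rough token estimate. The ratio is an assumed heuristic, not a tokenizer."""
    return math.ceil(len(text) / chars_per_token)


def fits_in_context(text: str,
                    reserved_for_output: int = 8_000,
                    limit: int = CONTEXT_LIMIT) -> bool:
    """Return True if the estimated prompt plus a reply budget stays within the limit."""
    return estimate_tokens(text) + reserved_for_output <= limit
```

Under these assumptions, a 400,000-character report would fit comfortably, while a one-million-character archive would need to be split or summarised first.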

Google says the model focuses strongly on reasoning and coding tasks. It can generate, complete and correct code, and it also supports function calling for structured interactions.
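Function calling generally means the model returns a structured request that the host application parses and executes. The sketch below shows that application-side step only. The JSON shape, the `TOOLS` registry and the `add` tool are hypothetical and do not represent Gemma 4's actual output format.

```python
import json

# Hypothetical registry mapping a tool name to a plain Python function.
TOOLS = {}


def tool(fn):
    """Register a function so that a model-issued call can reach it by name."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def add(a: float, b: float) -> float:
    return a + b


def dispatch(model_output: str):
    """Parse an assumed JSON call such as
    {"name": "add", "arguments": {"a": 2, "b": 3}} and run the matching tool."""
    call = json.loads(model_output)
    name = call["name"]
    if name not in TOOLS:
        raise ValueError(f"unknown tool: {name}")
    return TOOLS[name](**call.get("arguments", {}))


result = dispatch('{"name": "add", "arguments": {"a": 2, "b": 3}}')
```

Rejecting unknown tool names before calling anything keeps the application, rather than the model, in control of which code can run.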

Architecturally, Gemma 4 combines dense and mixture-of-experts (MoE) designs. The company says this helps balance performance and efficiency, especially when handling long inputs.
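In general terms, a dense layer applies all of its parameters to every input, while a mixture-of-experts layer uses a small router to send each input to only a few "expert" sub-networks. The toy sketch below illustrates generic top-k routing with plain Python lists. It is not Google's implementation, and the router weights and experts are made up for illustration.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(x, router_weights, experts, k=2):
    """Route x to the k highest-scoring experts and mix their outputs."""
    # One router score per expert: the dot product of its weight row with x.
    scores = [sum(w * xi for w, xi in zip(row, x)) for row in router_weights]
    top = sorted(range(len(experts)), key=lambda i: scores[i], reverse=True)[:k]
    gates = softmax([scores[i] for i in top])  # renormalise over chosen experts
    out = [0.0] * len(x)
    for gate, i in zip(gates, top):
        y = experts[i](x)  # only the selected experts are evaluated
        out = [o + gate * yi for o, yi in zip(out, y)]
    return out, top


# Hypothetical experts and router weights for a 2-dimensional input.
experts = [
    lambda v: [2 * a for a in v],
    lambda v: [a + 1 for a in v],
    lambda v: [0.0 for _ in v],
]
router_weights = [[1.0, 0.0], [0.0, 1.0], [-1.0, -1.0]]
output, chosen = moe_forward([1.0, 1.0], router_weights, experts, k=2)
```

Because only `k` experts run for each input, the compute cost per input can stay well below what the layer's total parameter count would suggest, which is the usual efficiency argument for this design.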

The release reflects a broader move toward multimodal AI systems that can understand text, images and audio together. Google says tools like Gemma 4 are intended to work across many real-world applications rather than a single task.